Have you ever asked a user of your product how they like it, and had them tell you it’s fantastic, super easy to use, and they love it?

I bet it made you feel great, didn’t it?

Well… bad news… that kind of feedback sucks, and hearing it does nothing for you besides making you feel all warm and fuzzy inside.

In order to get feedback you can actually use to build a better product, you need to know the right questions to ask, and the right answers to look for.

Simply asking your users how they like your product is a waste of time because it will always get the same response.

A number of years ago, I was working as Lead UX Designer at one of the first startups I ever joined. We were a small team of 6 people, and I was the lone full-time designer/UX guy.

We had a very close, personal relationship with the majority of our users, so getting user feedback was easy.

The problem? Almost everyone said the same thing.

WE LOVE IT! IT’S SO GREAT! AMAZING! INCREDIBLE!

But their behaviour told a different story. Many sections of the product remained untouched or barely used. It was clear that people were confused about what certain features were for, and often used them for the wrong thing.

But when asked about it?

WE LOVE IT! IT’S AMAZING!

So why was this happening?

It’s for the same reason almost everyone answers this way when asked “How do you like it?”

It’s because they feel compelled to say they love it, since it’s obvious that’s the answer you want to hear.

For example, let’s say your kid, or a friend’s kid, or any kid, runs up excitedly to show you the awful finger painting they made in class.

They look at you with those puppy dog eyes and say…

“I made it for you! Do you like it?”

“I hate it!! What a horrid pile of shit!” is clearly not the expected answer in this situation.

The child is now crying, and you have cemented yourself as an “emotionless monster” in the eyes of everyone around you.

The correct response is to praise the child then throw it away a day later (the drawing, not the child).

The point is, “Do you like it?” is what’s called a leading question, because the way it’s phrased leads the person toward the expected answer.

If all you’re asking are these types of leading questions, you’re not getting accurate feedback from people about your product.

But don’t worry, hope is here! By the end of this article, you will know the exact types of feedback you should be collecting, and how to go about getting it without the use of leading questions or becoming an emotionless monster.

Now I’m going to cover 10 of the most important pieces of user feedback you should be collecting, how to collect it, and exactly why it’s so vital to the success of your product.


1 — How likely are they to recommend your product to a friend?

If this sounds familiar, it should. It’s probably the most widely implemented feedback collection strategy in digital products.

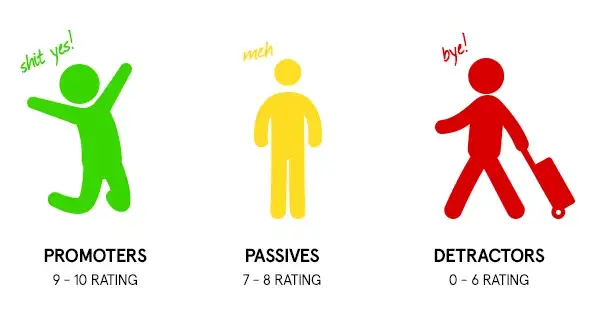

It’s called the Net Promoter Score®, and it’s a great way to gauge how satisfied your users are with your product.

Why is NPS Score so important?

NPS Score is an important metric because research shows that people are only willing to share or recommend a product when they’re happy with it themselves.

Why? Because recommending something means you’re putting your reputation on the line. If you tell a friend to use something and they end up hating it, they might never trust you again (especially after that whole finger painting thing).

I consider NPS a strong indicator of your users’ “happiness level”, and a good indication of how effective your UX and product design are.

On top of that, users are also asked why they gave the answer they did. This opens up a conversation between you and these users to further investigate why they might be unhappy.

How can you collect your NPS Score?

The method of collecting this bit of feedback isn’t overly complicated. You can simply send out a survey to your users asking the question:

“How likely are you to recommend this product to a friend?”

The answer is in the form of a number rating from 0–10.

After a user gives a rating, they are asked an open-ended follow-up question about why they gave that answer. This question is optional, since all you really need is the number.

Afterwards, users are separated into three groups:

- Promoters (9–10 rating)

- Passives (7–8 rating)

- Detractors (0–6 rating)

You calculate your score by subtracting the percentage of detractors from the percentage of promoters.
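The arithmetic is simple enough to sketch. The Python below is a minimal, illustrative version (the function name `net_promoter_score` is made up for this example) that takes raw 0–10 ratings and returns a score between -100 and 100. Passives aren’t subtracted, but they still count toward the total, which pulls the score toward zero.

```python
from typing import Iterable


def net_promoter_score(ratings: Iterable[int]) -> float:
    """Return NPS (-100 to 100) from 0-10 "how likely are you to recommend" ratings."""
    ratings = list(ratings)
    if not ratings:
        raise ValueError("need at least one rating")
    if any(not 0 <= r <= 10 for r in ratings):
        raise ValueError("ratings must be between 0 and 10")

    promoters = sum(1 for r in ratings if r >= 9)   # 9-10
    detractors = sum(1 for r in ratings if r <= 6)  # 0-6
    # Passives (7-8) are not subtracted, but they are part of the total.
    return 100 * (promoters - detractors) / len(ratings)


# 5 promoters, 2 passives and 3 detractors out of 10 responses
responses = [10, 9, 9, 8, 7, 6, 3, 10, 9, 5]
print(net_promoter_score(responses))  # 20.0
```

In this example, 50% promoters minus 30% detractors gives a score of 20.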

For an exact, step-by-step guide to setting this system up quickly and for free, download my free NPS Score guidebook + video tutorial here.

2 — What bugs or problems are they running into?

In my experience, some founders and product managers are deathly afraid of shipping anything that is less than perfect.

Before they release a new feature, they want to test it again and again and again until they’ve ironed out every single bug.

It’s as if a user running into a bug is going to make the entire company fold right then and there due to sheer embarrassment.

The reality is, it’s almost impossible to track down every single bug before you release something, and trying to is an enormous waste of valuable time.

Why is knowing about software bugs so important?

The problem with constant product testing is that it costs a ton of time and money.

For most startups, time is the most precious resource they have. The more time you spend chasing bugs, the less time you have to collect valuable user feedback that will actually improve your users’ satisfaction.

Believe it or not, running into a bug isn’t make-or-break for a user. Being unable to figure out how to use your product and get value from it, however, is.

How to uncover bugs faster in your product?

Make your users your product testers. Sounds crazy, right? But think about it. You have an entire ARMY of people using your product each and every day.

Hundreds, thousands, possibly hundreds of thousands of people could uncover 300 bugs in 3 days that would take your team 6 months to track down.

Giving your users the ability to quickly and accurately report a bug means you can have bugs tracked and fixed in record time.

I just stumbled across a great product called BugMuncher on Reddit the other day. It lets users quickly screenshot a bug and records important details along with it, like operating system, browser type, and version.

Consider implementing this product, or a similar solution of your own, that allows users to quickly and easily report bugs.

I don’t mean a big text box where you ask them to describe what happened. I mean a simple way to report a bug in under 15 seconds, without taking them away from the page they’re currently on.

3 — How happy are they with your customer support?

Your customer support team is like the bridge between an unsatisfied user and a super satisfied one. Super satisfied users are more likely to turn into promoters of your product, meaning they will recommend it to others.

Promoters are good. You want promoters.

That’s why it’s extremely important to constantly monitor whether people are happy with the support they receive.

Almost as important is how you go about collecting this data. It needs to be quick and easy for someone to rate a customer support interaction.

Oftentimes I’ll get a follow-up email saying something like “please help us and rate the support you received…”

I never respond to these emails, simply because a new email appearing in my inbox just means another thing I need to deal with, and since I know I can skip it, I do.

How can you make it easy for users to rate their support experiences?

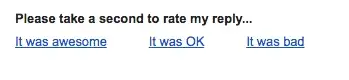

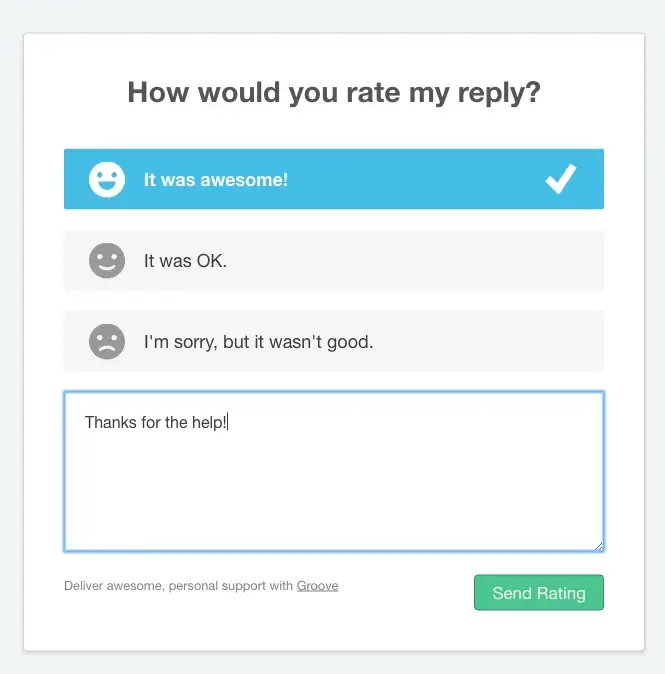

Groove uses one of the best solutions, in my opinion: giving users the ability to rate their support interactions at the bottom of each email.

You know those little “please rate our experience” links you see in email signatures, like this?

Those are a great way to give people the ability to rate their experience while at the same time keeping the effort required very low.

Clicking on any of these links brings the person to a pre-populated, one question survey with the option to explain more. This is a perfect setup.

Groove then calculates your users’ “satisfaction score” by subtracting the “not good” ratings from the “good” ratings.
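If you collect support ratings yourself instead of through Groove, the same subtraction takes a few lines. This sketch assumes three hypothetical labels (“good”, “okay”, “not good”), and it divides by the total number of ratings so the result is a percentage. That division is an assumption, not Groove’s documented formula; it simply keeps weeks with different ticket volumes comparable.

```python
from collections import Counter


def satisfaction_score(ratings: list[str]) -> float:
    """Net satisfaction: % of "good" ratings minus % of "not good" ratings."""
    if not ratings:
        raise ValueError("need at least one rating")
    # Normalize labels so "Good" and " good " are counted together.
    counts = Counter(label.strip().lower() for label in ratings)
    # Any other label (e.g. "okay") counts toward the total only.
    return 100 * (counts["good"] - counts["not good"]) / len(ratings)


week = ["good", "Good", "okay", "not good", "good"]
print(satisfaction_score(week))  # 40.0
```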

4 — Have they searched for alternatives since signing up?

If your product is even semi-successful, it means you’ve picked a niche with high demand for what you’re offering.

This is good. It means people actually want your product, and you didn’t just invent the idea out of thin air.

But, this also means that you’re almost guaranteed to have a number of competitors who have a similar, if not identical product to yours.

This is also good. Don’t forget, competition means demand.

Much like any other traditional business, your users always have the option to switch to a competitor should their satisfaction level start to decrease.

Sometimes, users will “flirt” with the idea of switching without actually taking the plunge. If you can catch these people in time, chances are you can convince them to stay.

Why is this so important?

Collecting this feedback is important because it presents the perfect opportunity to uncover major problems with your product that are so severe they are causing you to lose a user.

It’s highly unlikely a user who has been searching for alternatives is going to tell you… “Everything is great! I love it!”

A user at this stage is the perfect person to collect feedback from.

If you can fix every single one of these problems, you can greatly reduce your churn rate (the number of users who leave and never return) and keep more users coming back month after month.
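A common way to put a number on churn is the share of the users you had at the start of a period who left during it. A minimal Python sketch (the function name and the monthly period are illustrative; new signups during the period are deliberately left out):

```python
def churn_rate(users_at_start: int, users_lost: int) -> float:
    """Percentage of the users you had at the start of a period who left during it."""
    if users_at_start <= 0:
        raise ValueError("users_at_start must be positive")
    if not 0 <= users_lost <= users_at_start:
        raise ValueError("users_lost must be between 0 and users_at_start")
    return 100 * users_lost / users_at_start


# 800 users on the 1st of the month, 40 of them gone by the end of it
print(churn_rate(users_at_start=800, users_lost=40))  # 5.0
```

Keeping even 10 of those 40 users by acting on their feedback would bring monthly churn down from 5% to 3.75%.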

How to find out if users have been searching for alternatives?

Simply ask them. There are a number of ways you can do it, such as sending out a survey by email, or using a product like Intercom to ask a question right in the product interface.

The important part is that you ask them 2 questions:

- Have you searched for an alternative since you joined?

- If so, what were you looking for in a competitor’s product that we don’t have?

Why not simply ask them why they’re looking at competitors? Because it isn’t a direct enough question. Big questions without a clear, direct answer scare away people who feel tired at the idea of having to answer in such detail.

These open-ended answers can be a gold mine of information you had never considered. Perhaps your prices are too high? Maybe your product is too slow, too old, or doesn’t have a specific feature they’re looking for?

Consider following up with these people on a live Skype call to dive further into their problems and possible solutions that could get them to stay.

Just keep in mind that listening to a user and doing what a user says are completely different things. Don’t do what users say they want, instead listen and then give them what they need.

5 — What are their results from using your product?

People signed up for your product because of one single reason… you promised them it was going to improve their lives in one way or another.

Either by saving them time, money, stress, effort or increasing their happiness.

They signed up because of exactly what you promised them in the headline of your highly converting startup landing page you recently implemented.

If your product has done its job properly, after a certain amount of time, their lives should be better.

Just how much better is exactly what you want to learn from collecting this type of feedback.

Why are case studies so important?

Success stories from users are going to help you out in 2 ways:

- Uncovering benefits of your product you might not have realized.

- Providing you with a huge boost to your landing page conversions by offering results-oriented testimonials.

In landing page design, a big part of the battle in turning a visitor into a user is first making them understand the benefits of using the product, then convincing them that you can actually deliver what you’re promising.

That’s where user success stories come in.

Uncovering new benefits that users experience will give you an opportunity to write more effective copy on your landing page, or better yet, attract an entirely new set of users to your product.

As for convincing them you can actually deliver? That’s where testimonials come in.

Featuring a story from a user that details the exact results they got is going to help increase the trust factor for people who are on the fence about signing up.

If you’d like to learn more about landing page design, sign up for my free email course here which will teach you the 10 steps to designing an effective startup landing page.

How can you collect successful user case studies?

Start this process by pinpointing the power users of your product: people who use it far more than others, and who have invested a lot of money and time into it.

This will be a good indicator that they’re getting a large amount of value and great results.
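If your analytics can export per-user usage and revenue, a rough first pass at finding power users can be automated. This sketch assumes two hypothetical fields, sessions in the last 90 days and total spend, and treats someone as a power user only if they sit in the top slice on both, mirroring the “far more use” plus “a lot of money and time” test above. The default 10% cut-off is an arbitrary starting point.

```python
import math
from dataclasses import dataclass


@dataclass
class UserActivity:
    user_id: str
    sessions_last_90_days: int  # hypothetical usage metric
    total_spend: float          # hypothetical lifetime revenue from this user


def top_fraction_cutoff(values: list[float], fraction: float) -> float:
    """Smallest value that still falls within the top `fraction` of `values`."""
    ranked = sorted(values, reverse=True)
    index = max(1, math.ceil(len(ranked) * fraction)) - 1
    return ranked[index]


def find_power_users(users: list[UserActivity], fraction: float = 0.1) -> list[str]:
    """IDs of users in the top `fraction` for BOTH usage and spend."""
    if not users:
        return []
    session_cut = top_fraction_cutoff([u.sessions_last_90_days for u in users], fraction)
    spend_cut = top_fraction_cutoff([u.total_spend for u in users], fraction)
    return [
        u.user_id
        for u in users
        if u.sessions_last_90_days >= session_cut and u.total_spend >= spend_cut
    ]


users = [
    UserActivity("ana", 120, 900.0),
    UserActivity("ben", 95, 1200.0),
    UserActivity("cam", 10, 50.0),
    UserActivity("dee", 30, 1500.0),
]
print(find_power_users(users, fraction=0.5))  # ['ben']
```

Requiring both conditions is a judgment call: Ana uses the product heavily and Dee spends a lot, but only Ben does both, so only Ben makes this shortlist.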

Next, reach out to these people individually, through email, and ask if they’d be willing to share their story.

If they say yes, follow up with them. Prepare a list of questions you need answers for, but try not to structure it like an interview.

Don’t forget to get permission from them to feature them in your marketing.

6 — How satisfied are they?

Remember at the start of this article where you learned about NPS Score and asking a user how likely they were to recommend your product to a friend?

While I think this is a fantastic indicator of user satisfaction, it doesn’t hurt to have a backup. Why? Because not everyone can accurately answer the “how likely are you to recommend this product…” question.

Some people simply don’t know anyone who would be interested. Others might just be against recommending anything at all.

This is why asking a user straight up “how satisfied are you?” can also be a great indicator of how well your product is working.

Why is user satisfaction so important?

Simply put, users don’t keep using things they don’t like.

Chances are, if a large number of users have told you that they’ve been searching for alternatives to your product, it also means that your “user satisfaction” score is going to be low.

Keeping your users satisfied is the most important part of your product design. It’s the ultimate goal of every feature you add (or don’t add), and every redesign you implement.

How to find out if your users are satisfied?

Jakob Nielsen suggests simply asking them to answer this question by choosing a number on a scale of 1–7.

“Averaging the scores across users gives us an average satisfaction measure.”

Says Jakob, the Godfather of UX.
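Averaging is straightforward, but it’s worth validating responses so a stray out-of-range value doesn’t skew the result. A minimal sketch, assuming scores arrive as integers on Nielsen’s 1–7 scale (the function name is illustrative):

```python
def average_satisfaction(scores: list[int], low: int = 1, high: int = 7) -> float:
    """Mean satisfaction score across users on a low..high rating scale."""
    if not scores:
        raise ValueError("need at least one score")
    out_of_range = [s for s in scores if not low <= s <= high]
    if out_of_range:
        raise ValueError(f"scores outside {low}-{high}: {out_of_range}")
    return sum(scores) / len(scores)


print(average_satisfaction([6, 5, 7, 4, 6]))  # 5.6
```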

This could be done by sending an email survey using SurveyMonkey, or by using a product like Intercom to ask them directly in the product interface.

Don’t forget to wait until they’ve had a chance to explore your product before asking this question. A brand new user won’t have enough experience to give you an accurate response.

7 — What do they think about a newly released feature?

Here’s the process I’ve noticed a lot of digital startups follow:

- Spend hours building initial product.

- Release.

- Immediately start thinking of NEW ideas to include in product.

- Spend hours building and releasing new features.

- Get disappointed that people aren’t flocking to the product.

- Decide it must be because there aren’t enough features.

- Release 4,000 new features.

- Attempt to deal with bugs, usability issues, updates, and customer support problems that come along with every single new feature.

- Run out of money.

Is it obvious how much I hate adding features?

I hate them.

Almost as much as I hate that default of releasing features instead of focusing on user feedback, I hate what happens once a feature is released… NOTHING.

After releasing a new feature, you must follow it up with a process that validates the existence of the feature.

If no one uses it, and no one wants it, it shouldn’t exist in the product. That’s why we want to know everything we can about how users are interacting with it.

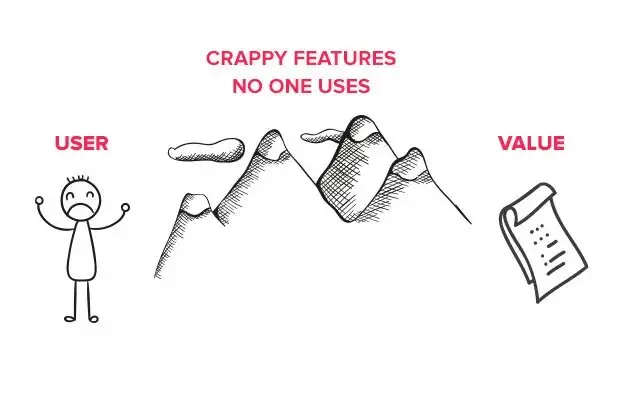

Why is feature feedback so important?

Bloated, unused features dilute the value of your product, and they add to poor usability.

Every feature you add that doesn’t add value for a user just becomes one more pain-in-the-ass obstacle they need to navigate around to get to the nugget of value that still exists.

Collecting feedback on how well users understand, or respond, to a new feature is a vital part of this feature validation process.

How to find out if/how a feature is being used?

The quickest and easiest way to do this is by looking at your analytics. Are people navigating to that page of your product or not?

This will give you a rough idea of how often people navigate to the feature, but it won’t tell you whether they understand how to use it, or what it’s even for.
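If your analytics can export raw page views, you can turn “are people navigating to it?” into a single adoption number: the share of active users who opened the feature at least once. A sketch with hypothetical data (the `/reports` path and the user IDs are made up). A high number only tells you people are finding the feature, not that they understand it.

```python
def feature_adoption_rate(
    page_views: list[tuple[str, str]], active_users: set[str], feature_path: str
) -> float:
    """Percentage of active users who viewed `feature_path` at least once."""
    if not active_users:
        raise ValueError("need at least one active user")
    visitors = {
        user_id
        for user_id, path in page_views
        if path == feature_path and user_id in active_users
    }
    return 100 * len(visitors) / len(active_users)


views = [
    ("u1", "/reports"),
    ("u1", "/reports"),  # repeat visits by one user only count once
    ("u2", "/dashboard"),
    ("u3", "/reports"),
]
print(feature_adoption_rate(views, {"u1", "u2", "u3", "u4"}, "/reports"))  # 50.0
```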

To find that out, you need to perform user testing.

Set up a user test and give your users a set of tasks to complete. Include the new feature as part of one of those tasks, and see if they’re able to find it, and use it, on their own.

Analyze their behaviour, and encourage them to think out loud as they navigate.

Don’t forget that testing with more than 5 people can be a waste of time in most situations.
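The five-user rule of thumb comes from a model by Jakob Nielsen and Tom Landauer: if a single test user uncovers a proportion L of the usability problems, n users uncover about 1 − (1 − L)^n of them. Nielsen reported L averaging around 31% across the projects he studied; the rate for your own product may differ. A quick Python sketch of the curve:

```python
def share_of_problems_found(n_users: int, per_user_rate: float = 0.31) -> float:
    """Nielsen-Landauer estimate of the share of usability problems found by n users."""
    if n_users < 0 or not 0 <= per_user_rate <= 1:
        raise ValueError("n_users must be >= 0 and per_user_rate in [0, 1]")
    return 1 - (1 - per_user_rate) ** n_users


for n in (1, 3, 5, 10, 15):
    print(f"{n:>2} users: {share_of_problems_found(n):.0%}")
```

With L = 31%, five users surface roughly 84% of problems, and doubling to ten adds only about 13 more percentage points. That diminishing return is why Nielsen recommends several small rounds of testing over one big one.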

Check out my guide to user testing for a complete breakdown of how to perform your first user test quickly and easily.

8 — How much effort is it taking them to perform specific actions?

This is the exact opposite of asking a high level question like “are you satisfied?”

Instead, this question focuses on the small detail of how hard it was to do one single thing.

For example, let’s say a user just went through the process of integrating a payment system, like Stripe, with their account.

When the process finishes, a small notification, directly within the product, would ask them to rate the amount of effort it took them to perform the task, rated on a scale of 1–10.

Why is user effort so important?

The goal of building a usable product is to minimize the amount of effort it takes for users to reach their goals.

That’s why it’s important to continue to test, iterate, and improve upon the various features you’ve built.

Asking a user to rate how difficult a task was gives you the opportunity to focus on features that users are currently rating as “very difficult”, instead of building new features or trying to fix things that aren’t really a problem.
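Once in-product effort ratings start coming in, the useful view is an average per task, sorted so the hardest tasks rise to the top. This sketch assumes 1 means “very easy” and 10 means “very hard”, and uses an arbitrary threshold of 8 for “very difficult”; the task names are hypothetical.

```python
from collections import defaultdict


def rank_tasks_by_effort(
    ratings: list[tuple[str, int]], very_difficult: float = 8.0
) -> tuple[list[tuple[str, float]], list[str]]:
    """Average effort per task (1 = very easy, 10 = very hard), hardest first,
    plus the tasks whose average meets the `very_difficult` threshold."""
    scores_by_task: dict[str, list[int]] = defaultdict(list)
    for task, score in ratings:
        if not 1 <= score <= 10:
            raise ValueError(f"effort score out of range for {task!r}: {score}")
        scores_by_task[task].append(score)

    averages = {task: sum(s) / len(s) for task, s in scores_by_task.items()}
    ranked = sorted(averages.items(), key=lambda item: item[1], reverse=True)
    flagged = [task for task, avg in ranked if avg >= very_difficult]
    return ranked, flagged


ratings = [
    ("connect_stripe", 9),
    ("connect_stripe", 8),
    ("invite_teammate", 2),
    ("invite_teammate", 3),
    ("export_csv", 6),
]
ranked, flagged = rank_tasks_by_effort(ratings)
print(ranked)   # [('connect_stripe', 8.5), ('export_csv', 6.0), ('invite_teammate', 2.5)]
print(flagged)  # ['connect_stripe']
```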

It’s kind of like the way users can rate support emails, except it’s directly within your product.

How can you find out how much effort tasks are taking your users?

Keeping track of exactly how much effort it takes users to perform all of the different tasks on your product is time consuming.

You need to be running constant user surveys, user tests, observing behaviours on each task, and then combining a ton of test data to determine what features are requiring the most effort.

This is called task-level satisfaction measurement.

The result of executing these properly is a set of analytics that show you exactly what areas of your product design need attention first, and which areas are having the biggest impact on your users’ experience.

If you want to learn more about the types of task-level satisfaction measurements, check out this article on ConversionXL.

9 — How often are they using your product?

How often does the average user use your product, and why is that the average?

Every day? Every week? Once a year?

Is there any reason it seems unusually low or high?

Why is how often users log in so important?

Increasing how frequently people use your product may be extremely important to you, or it might not matter much at all.

It really depends on the goals of your product.

For a product like Facebook, the more often a person visits the site and the longer they stay, the better. Why? Because they’re exposed to more advertising.

But for a business-to-business product, like web hosting for example, this might not matter as much.

Regardless, it’s important to have a benchmark in order for you to be alerted if average use starts to drop, or increase.
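A benchmark can be as simple as the average of past weeks with an alert band around it. The sketch below uses a hypothetical metric (average days per week that users log in) and an arbitrary 20% tolerance; swap in whatever frequency measure matches your product’s goals.

```python
from typing import Optional


def check_usage(history: list[float], current: float, tolerance: float = 0.2) -> Optional[str]:
    """Compare this period's usage to the average of past periods.

    Returns "drop" or "increase" when usage moves more than `tolerance`
    (a fraction of the baseline) away from the baseline, otherwise None.
    """
    if not history:
        raise ValueError("need at least one past period as a benchmark")
    baseline = sum(history) / len(history)
    if baseline == 0:
        return "increase" if current > 0 else None
    change = (current - baseline) / baseline
    if change <= -tolerance:
        return "drop"
    if change >= tolerance:
        return "increase"
    return None


# Average days per week users logged in, over the last four weeks
past_weeks = [3.1, 2.9, 3.0, 3.2]
print(check_usage(past_weeks, current=2.2))  # drop
print(check_usage(past_weeks, current=3.0))  # None
```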

How can you find out how much people are using your product?

The easiest approach is to simply check your analytics. This won’t give you much beyond a daily number, but it’s a start.

In order to go deeper, check out a product like Intercom (which I’ve referenced 100 times by now). It will automatically segment your users into lists based on their behaviour.

This allows you to communicate with groups of users in order to find out why they’re falling into the “slipping away” category.


Intercom automatically lists users who may be slipping away.

Speaking to users who are starting to “slip” will enable you to learn about specific problems users have with your product you may have never considered before.
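If you’d rather build a rough version of that list yourself, one simple rule is to flag users who have been idle long enough to worry about, but not so long that they’re already gone. This is a hypothetical rule, not Intercom’s actual definition, and the 14- and 60-day windows are arbitrary:

```python
from datetime import date


def slipping_away(
    last_seen: dict[str, date],
    today: date,
    idle_after_days: int = 14,
    gone_after_days: int = 60,
) -> list[str]:
    """Users idle for at least `idle_after_days` but fewer than `gone_after_days`."""
    return sorted(
        user_id
        for user_id, seen in last_seen.items()
        if idle_after_days <= (today - seen).days < gone_after_days
    )


last_seen = {
    "ana": date(2024, 5, 30),  # 2 days idle: still active
    "ben": date(2024, 5, 10),  # 22 days idle: slipping
    "cam": date(2024, 1, 15),  # 138 days idle: probably gone already
    "dee": date(2024, 5, 18),  # 14 days idle: slipping
}
print(slipping_away(last_seen, today=date(2024, 6, 1)))  # ['ben', 'dee']
```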

10 — What are they searching for and why?

Do you have a support FAQ? A site wide search function? Maybe even support guide analytics?

Have you ever looked at what people are searching for?

Search boxes are gold mines for uncovering usability issues you might not be aware of, issues that are destroying the user’s experience.

Why are search analytics important?

In user experience design, to make a product more user friendly, we perform tests with live users and observe their behaviour.

But… how do we decide what to test?

This is more difficult. Testing your entire product just to uncover problems can be expensive, time consuming, and not always as insightful as you want.

But knowing about a “problem area” that needs iteration and testing is a huge advantage. That’s where search analytics come in.

Users tend to use the search function as an “escape hatch” when they get confused or lost while attempting to perform a task.

This means any phrase that’s searched for over and over is a strong indication of a widespread usability issue.

Once you know the problem, go out and recruit 5 users to test, and watch how well they can perform the task. This feedback should give you a clearer picture of why people are all searching for the same thing.

How can you collect search analytics?

Unfortunately, this isn’t something as simple as sending a survey out to your users and asking them a question.

You will need to set up your own product to track search phrases.
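If you do build it yourself, the core is small: store every query, normalize it so trivial variations count as the same phrase, and count. A minimal sketch using Python’s built-in `sqlite3` module; the table and function names are hypothetical, and a real product would write to its main database rather than an in-memory one.

```python
import sqlite3


def create_search_log(conn: sqlite3.Connection) -> None:
    conn.execute(
        """CREATE TABLE IF NOT EXISTS search_log (
               id INTEGER PRIMARY KEY,
               user_id TEXT,
               query TEXT NOT NULL,
               searched_at TEXT DEFAULT CURRENT_TIMESTAMP
           )"""
    )


def log_search(conn: sqlite3.Connection, user_id: str, raw_query: str) -> None:
    # Lowercase and collapse whitespace so "Export CSV" and "export  csv " match.
    query = " ".join(raw_query.lower().split())
    if query:
        conn.execute(
            "INSERT INTO search_log (user_id, query) VALUES (?, ?)", (user_id, query)
        )
        conn.commit()


def most_searched(conn: sqlite3.Connection, limit: int = 10) -> list[tuple[str, int]]:
    return conn.execute(
        """SELECT query, COUNT(*) AS times FROM search_log
           GROUP BY query ORDER BY times DESC, query LIMIT ?""",
        (limit,),
    ).fetchall()


conn = sqlite3.connect(":memory:")
create_search_log(conn)
for user, text in [
    ("u1", "Export CSV"),
    ("u2", "export csv "),
    ("u3", "Cancel subscription"),
    ("u4", "export  CSV"),
    ("u2", "cancel subscription"),
]:
    log_search(conn, user, text)
print(most_searched(conn))  # [('export csv', 3), ('cancel subscription', 2)]
```

A phrase like “export csv” topping this list would be the cue to recruit a few users and watch them try to export.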

If that’s outside your current scope, consider installing a third-party tool to make search tracking a bit easier.

I recommend checking out Swiftype, which I have personally used with a great deal of success.

On top of that, be sure to have a properly designed search function (and search engine results page).

Collecting and implementing user feedback is the only way to successfully improve your product

But simply asking a user “hey how much do you like this?” is a great way to feel good about yourself without actually learning anything important.

This post should have given you some insight into the types of feedback you should be collecting, and the way that feedback will inform your decisions on how to build a better product.

To get started right now, download my free guide to easily measuring your product’s NPS score.

This will give you fantastic insight into just how satisfied your users are with what you’ve built, and what the exact reasons are for those who aren’t.

If you download and follow my guide, you could have your results in less than a week, for free.